Why are there dogs and eyeballs in my succulents?

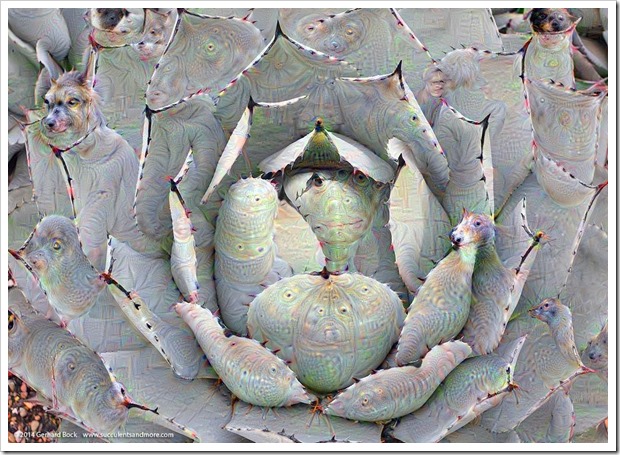

Imagine waking up in the middle of the night with this image fresh in your head:

At best you feel uneasy. At worst you're afraid of going back to sleep.

Why is it that we find this image so disturbing? Probably because disembodied eyeballs and creepy dog faces are not part of our reality.

Computers don't see it that way. To them, eyeballs, dog faces, cacti and agaves are all just shapes and patterns, and one is no more or less strange than another.


As part of an ambitious project designed to improve object recognition in photos (code-named “Deep Dream”), Google trained an artificial neural network by feeding it a massive collection of 1.2 million images, each labeled with what it showed (bird, building, dog, etc.).

After Deep Dream had “learned” these shapes, Google tested it by giving it new images to classify, i.e., to determine whether a photo showed a bird, a building, a dog, etc. As part of this process, Deep Dream was asked to visualize the patterns it “saw” in images so the developers could better understand what was going on in the various layers of the neural network. This is where things got interesting. Deep Dream produced bizarre creations (disembodied dog heads, reptilian legs, floating pagodas) attached to or embedded in the shapes that existed in the actual photo.

To us it may seem like a nightmare from the mind of Hieronymus Bosch, but to Deep Dream it’s simply what it has learned. And since Deep Dream must have been fed a disproportionately high number of dog and bird photos during its training, dog and bird heads, or parts of them, show up in virtually every image “dreamt” up by the engine.

This short video describes the process much better than I can. This post from the Google Research Blog covers the technical background if you’re interested.

A few weeks ago Google made some of the Deep Dream code available as open source. Several web sites have since popped up that allow you to submit your own photos for Deep Dream processing. Needless to say, I was curious to see what Deep Dream would do to my own photos, so I uploaded some to http://deepdreams.zainshah.net/ (other sites are listed here).

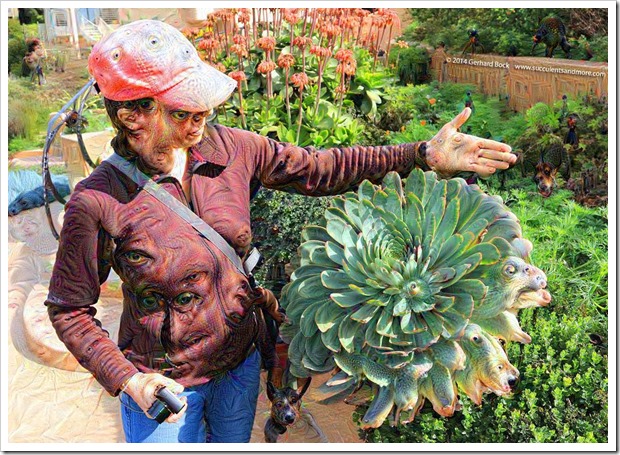

The results are as bizarre as I had expected, sometimes even more so. On human faces the effects are even more unsettling because our tolerance of what is "normal" is so narrow. A quick Google search will produce scores of Deep Dream images of people, including politicians, celebrities and folks like you and me. This selfie of yours truly is a good example:

As interesting as all of this is in the abstract, you might be wondering what this has to do with plants and gardening.

Not much at the moment, but potentially a lot down the line.

As object recognition advances thanks to projects like Google Deep Dream, software programs of the future will be able to analyze the photos you take and classify them according to what they show: plants, buildings, people. Taking it a step further, the software may even be able to determine that a photo is of an agave, an aloe, or a cactus, and possibly even which species.

Imagine how handy it will be to tell your computer, or smartphone, or whatever else we may be using then, to search through your thousands of photos and pull those that show a saguaro cactus at sunset, or an agave in a red container, or a flowering water lily, or your sleeping dog. The search results will be based on the actual image content, analyzed and recognized by software, instead of text keywords you laboriously entered yourself (the only way this kind of search could be done in the past).

Or imagine taking a photo of a plant you don’t recognize and asking your computer to identify it. It would be like reverse image search in Google Images, but with infinitely more useful and accurate results.

All of that will be possible someday.

And maybe androids will dream of electric sheep after all.

———————————————————

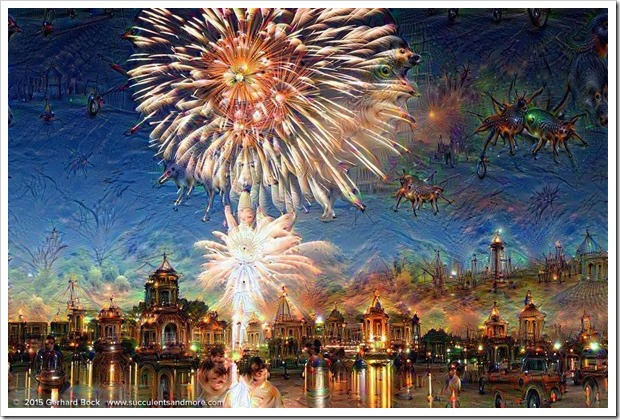

The results are particularly mesmerizing on photos with lots of sky or even-toned blank space:

Picacho Peak State Park, Arizona

Mesquite tree

Our house in the fog

Bamboo in the front yard in the fog

Canada Day fireworks, Victoria, BC

I love it! So fabulous. Thankyouthankyouthankyou. And to think that it was done by a computer! I'll never see an agave the same way again. There's a program called Merlin being developed that will help you ID a bird. It works on the same principle, I think. Great stuff: computer geeks using technology for actually having fun! Remember Easter Eggs?

I'm glad you enjoyed it, Jane. I think image recognition will revolutionize life, and yet it's still under the radar of most people.

Easter Eggs! I haven't heard THAT word in a long time. I was crazy into Easter Eggs, scouring the web (in its infancy then) for clues on where to find them in software programs and DVDs.

Some are fascinating, some would make great prints, some are clever, some creepy! I enjoyed the variety you have shown, Gerhard!

I also thought that some would make fantastic prints. Unfortunately, the image size at the site I tried (http://deepdreams.zainshah.net/) is limited to 1048 px in the longest dimension. That's not good enough for large prints. But I bet we'll see more sites that allow access to the Deep Dream code, and at higher resolutions to boot.

Fascinating! I tried a sunflower photo using another app, as the one you used wasn't responding this afternoon. Mine wasn't as creepy as the dogs and eyeballs, but it was fun.

The sites I tried are quite overloaded at the moment. This one worked the best:

http://deepdreams.zainshah.net/

After you upload your file, it still takes 10-15 minutes for the altered images to display. Just leave the page open and do something else while it's processing.

I kept seeing Aloe mite damage. And your home has been invaded by Munchkins from Mars. My dreams tonight will be strange.

LOL, some of the imaginary critters look like they could do all kinds of damage to succulents :-).

This is really great: creepy, but fascinating! Beautiful images too.

I'm surprised it's not seeing cats everywhere...

I figured you would understand the technical background of Deep Dream better than anybody else I know.

Now that you mention it, the absence of cats is surprising. Maybe the eyes are from cats??

Those images are really beautiful and disturbing pretty much in equal measure. But mesmerising at the same time.

Mesmerizing is the perfect word. I keep wanting to create more, but the site I used for the images shown in this post (deepdreams.zainshah.net) is down now. It probably received too many requests...

These images are incredibly cool!
